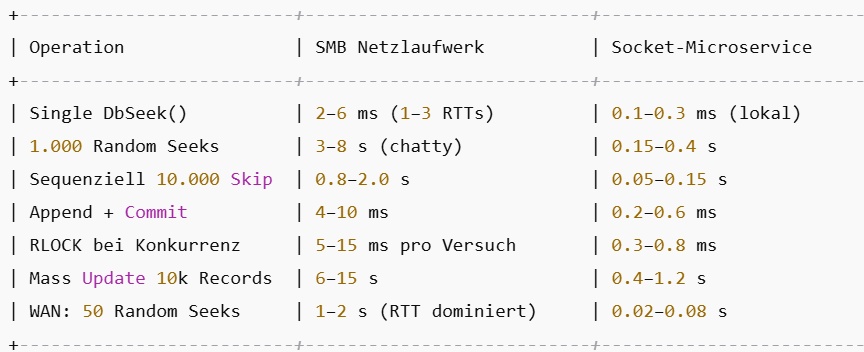

In classic DBF applications, clients access the data through a network drive (X:\ or \\Server\Share\...). That means every record read, write, and lock request goes across SMB:

Each DbSeek(), DbSkip(), DbGoTo() = multiple SMB roundtrips.

Each FLOCK()/RLOCK() = extra SMB traffic to negotiate byte-range locks.

Performance degrades quickly with latency or many users, because each client is “chatty” over the network.

With a socket-based microservice:

Only one process on the server opens the DBF files locally.

All client requests are sent once over a socket (TCP/HTTP/WebSocket) and executed inside the server.

This reduces network traffic drastically: clients send “give me record 123”, and only the result (JSON, binary) comes back.

Fewer roundtrips, less chatter → much faster, especially on WAN/VPN connections.

In short:

SMB = high chatter, lock negotiation across the network, slows down as users grow.

Socket service = one local handle, single disk I/O, network only carries compact requests/results → often 5–10× faster in real scenarios.