Hello friends,

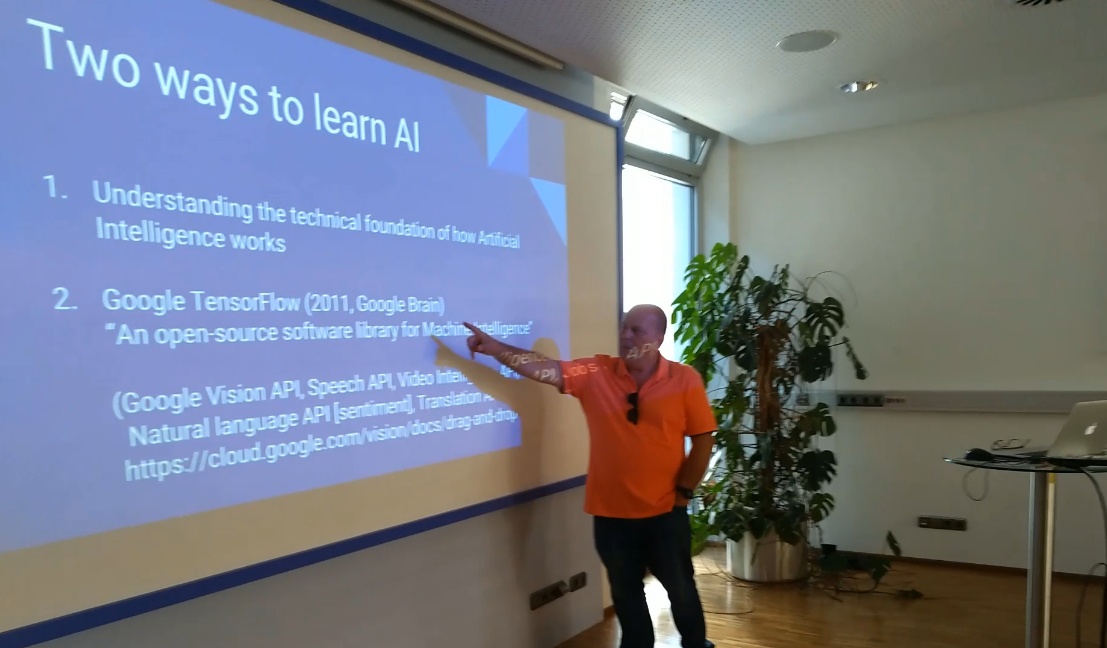

Many years after Antonio Linares presented the first AI examples in the FiveWin environment, it has become clear how valuable these early approaches were. The simple perceptron-based examples in particular are excellent for truly understanding artificial intelligence, often better than modern, highly abstracted AI tools that hide many of the underlying mechanisms.

These examples make visible the foundations on which today’s AI systems are built: neurons, weights, activation functions, forward propagation, error calculation, backpropagation, and learning rate. Anyone who has worked through these concepts gains a much clearer mental model than the majority of today’s AI users.

For us as programmers, this understanding is particularly important: not so that we can build AI systems ourselves, but so that we can realistically assess how AI learns, where its limits are, and why it can sometimes be convincingly wrong. For this reason, I have put together a learning plan that starts exactly at this level, and I am happy to share it here.

1st FWH + [x]Harbour 2017 international conference - https://forums.fivetechsupport.com/viewtopic.php?t=33515

Finally, a sincere thank-you to Antonio for his research and development work over the years, and for sharing these ideas with our community—it has had a lasting impact and remains highly relevant today.

Best regards,

Otto

Internal Training Document

Fundamental Understanding of Artificial Intelligence (AI) for Application Developers

Target audience:

Application developers, technical staff, IT-oriented professionals

Prerequisites:

Basic programming knowledge (variables, loops, classes, functions)

Training goal:

To develop a realistic, technical understanding of AI in order to use it safely, effectively, and independently in daily work.

---

1. What AI is – and what it is not

1.1 What AI is not

- No thinking

- No consciousness

- No genuine understanding

- No “knowledge” in the human sense

AI does not decide — it computes probabilities.

---

1.2 What AI actually is

A statistical pattern recognition system, trained on many examples to determine which output best fits a given context.

AI does not work with rules like classical programs, but with weights.

---

2. The core principle: learning through weight adjustment

To explain this, we use a simplified perceptron model (implemented in Harbour/FiveWin).

2.1 Simplified perceptron – core idea

    // inside a loop over n: add input n times weight n to the running sum
    nSum += aInputs[ n ] * ::aWeights[ n ]

An artificial neuron does exactly one thing:

Input × weight → summed value

The result is just a number — nothing more.
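The weighted sum can be tried out on its own. The following is a minimal console sketch, not the original class: `WeightedSum()` is a hypothetical helper, and the input and weight values are made up for illustration.

```harbour
// Minimal sketch; WeightedSum() and all values are hypothetical
procedure Main()

   local aInputs  := { 1, 2, 3 }
   local aWeights := { 0.5, 0.25, 0.1 }

   // 1 * 0.5 + 2 * 0.25 + 3 * 0.1 = 1.3
   ? "Weighted sum:", WeightedSum( aInputs, aWeights )

return

// Multiplies each input by the weight at the same position and adds up
function WeightedSum( aInputs, aWeights )

   local nSum := 0
   local n

   for n := 1 to Len( aInputs )
      nSum += aInputs[ n ] * aWeights[ n ]
   next

return nSum
```

Changing a single weight changes the result, which is the only lever the learning step has.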

---

2.2 Learning through correction

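    // nSum is the weighted sum, nExpectedResult the target value
    if nSum < nExpectedResult
       ::aWeights[ 1 ] += 0.1    // too small: raise the weight a little
    endif
    if nSum > nExpectedResult
       ::aWeights[ 1 ] -= 0.1    // too large: lower the weight a little
    endif

The learning rule is simple: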

- Result too small → slightly increase the weight

- Result too large → slightly decrease the weight

That is learning.

No rules, no logic, no understanding.
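To watch the correction rule at work, it can be run in a loop. This is a minimal sketch under assumptions: `TrainOnce()` is a hypothetical helper, and the single input (2) and the target (3.1) are chosen only for illustration.

```harbour
// Minimal sketch; TrainOnce() and the sample values are hypothetical
procedure Main()

   local aWeights  := { 0 }     // one weight, starting at zero
   local aInputs   := { 2 }     // a single input value
   local nExpected := 3.1       // target output
   local nRound, nSum

   for nRound := 1 to 20
      nSum := TrainOnce( aInputs, aWeights, nExpected )
      ? "Round", nRound, "sum", nSum, "weight now", aWeights[ 1 ]
   next

return

// Computes the weighted sum, then moves the first weight by a fixed
// 0.1 step toward the expected result. Returns the sum before the change.
function TrainOnce( aInputs, aWeights, nExpected )

   local nSum := 0
   local n

   for n := 1 to Len( aInputs )
      nSum += aInputs[ n ] * aWeights[ n ]
   next

   if nSum < nExpected
      aWeights[ 1 ] += 0.1
   elseif nSum > nExpected
      aWeights[ 1 ] -= 0.1
   endif

return nSum
```

With these values the sum climbs in steps of 0.2 and then alternates between roughly 3.0 and 3.2. The fixed step never lands on 3.1, so the model approximates the target without ever "knowing" it.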

---

2.3 Key insight

The model does not know why the result is wrong.

It only knows that it is wrong.

This principle applies to all neural networks, including modern AI systems.

---

3. The limits of this model (intentional)

The perceptron:

- does not recognize mathematical rules

- does not understand meaning

- only approximates values

These limitations are not flaws — they are didactically essential.

Anyone who understands these limits will not overestimate AI.
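A small experiment makes the limit tangible. The sketch below assumes a model with a single weight and two made-up samples of the rule "output = input + 1"; `TotalError()` is a hypothetical helper. It simply tries many weights and keeps the best one.

```harbour
// Minimal sketch; TotalError() and the samples are hypothetical
procedure Main()

   // Each sample is { input, expected output } for "output = input + 1"
   local aSamples    := { { 1, 2 }, { 2, 3 } }
   local nBestWeight := 0
   local nBestError  := TotalError( aSamples, 0 )
   local nStep, nWeight, nError

   // Try the weights 0.00, 0.01, ... 3.00 and remember the best one
   for nStep := 0 to 300
      nWeight := nStep / 100
      nError  := TotalError( aSamples, nWeight )
      if nError < nBestError
         nBestError  := nError
         nBestWeight := nWeight
      endif
   next

   ? "Best weight:", nBestWeight
   ? "Remaining total error:", nBestError

return

// Sum of absolute differences between input * weight and expected output
function TotalError( aSamples, nWeight )

   local nError := 0
   local n

   for n := 1 to Len( aSamples )
      nError += Abs( aSamples[ n ][ 1 ] * nWeight - aSamples[ n ][ 2 ] )
   next

return nError
```

The best candidate is a weight of 1.5, and even then a total error of 0.5 remains. No single weight reproduces "add one" for both samples: the model fits numbers as well as it can, but it does not discover the rule.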

---

4. Bridge to modern AI systems (LLMs)

4.1 What is different in modern AI models

| Perceptron | Modern AI (LLM) |
|---|---|
| 1 weight | Billions of weights |
| One number | High-dimensional vectors |
| Explicit target values | Probabilities |
| Local training | Training on massive datasets |

4.2 What remains the same

The learning principle is identical:

Small adjustments → better statistical fit

A Large Language Model (LLM) is not a different kind of entity, but an extreme scaling of the same principle.
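"Small adjustments" can be made proportional to the error instead of using a fixed 0.1 step. The sketch below uses the delta rule, a standard textbook variant and not the rule from the perceptron excerpt in section 2.2; `Predict()`, `Adjust()`, the learning rate of 0.1, and the sample values are assumptions for illustration.

```harbour
// Minimal sketch of the delta rule; all names and values are hypothetical
procedure Main()

   local aWeights  := { 0, 0 }
   local aInputs   := { 1, 2 }
   local nExpected := 4
   local nRate     := 0.1       // learning rate: size of each adjustment
   local nRound, nError

   for nRound := 1 to 10
      nError := nExpected - Predict( aInputs, aWeights )
      ? "Round", nRound, "error", nError
      Adjust( aInputs, aWeights, nError, nRate )
   next

return

// Weighted sum of the inputs
function Predict( aInputs, aWeights )

   local nSum := 0
   local n

   for n := 1 to Len( aInputs )
      nSum += aInputs[ n ] * aWeights[ n ]
   next

return nSum

// Moves every weight in proportion to the error and to its own input
function Adjust( aInputs, aWeights, nError, nRate )

   local n

   for n := 1 to Len( aWeights )
      aWeights[ n ] += nRate * nError * aInputs[ n ]
   next

return nil
```

With these values the error roughly halves in every round (4, 2, 1, 0.5, ...). A larger learning rate corrects faster but can overshoot. Modern training uses a far more elaborate version of this loop, applied to billions of weights.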

---

5. Why AI appears “intelligent”

AI has learned:

- how explanations are structured

- how technical texts are written

- what plausible answers look like

However, AI does not verify whether something is true, correct, or applicable.

AI optimizes plausibility, not truth.

---

6. Why prompting works

A prompt:

- provides context

- shifts probabilities

- guides the model in a specific direction

Prompting is not magic. It acts like temporary context training: the model's weights stay unchanged, and only the context it computes from changes.

The clearer the context, the more stable the output.
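The idea of "shifting probabilities" can be shown with a toy calculation. This sketch is a strong simplification and not how any real model is implemented: the three candidate words, their scores, and the helpers `Softmax()` and `ShowProbs()` are invented for illustration. Softmax is the usual function for turning raw scores into probabilities that add up to 1.

```harbour
// Toy sketch; words, scores and helper names are invented for illustration
procedure Main()

   local aWords  := { "compiler", "database", "holiday" }
   local aScores := { 1.0, 1.0, 1.0 }   // no context: equal scores

   ? "Without context:"
   ShowProbs( aWords, Softmax( aScores ) )

   // Assumed effect of a prompt about source code: the score of the
   // fitting word goes up, the other scores stay as they were
   aScores[ 1 ] += 2.0

   ? "With context:"
   ShowProbs( aWords, Softmax( aScores ) )

return

// Turns raw scores into probabilities that add up to 1
function Softmax( aScores )

   local aProbs := {}
   local nTotal := 0
   local n

   for n := 1 to Len( aScores )
      AAdd( aProbs, Exp( aScores[ n ] ) )
      nTotal += aProbs[ n ]
   next

   for n := 1 to Len( aProbs )
      aProbs[ n ] /= nTotal
   next

return aProbs

// Prints each word with its probability, three decimals
procedure ShowProbs( aWords, aProbs )

   local n

   for n := 1 to Len( aWords )
      ? "  " + aWords[ n ], Str( aProbs[ n ], 6, 3 )
   next

return
```

Without context each word gets a probability of one third. After the shift, the first word rises to about 0.79 while the other two drop to about 0.11 each. Nothing was learned in between; only the scores that feed the calculation changed.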

---

7. Proper use of AI in everyday work

7.1 Suitable use cases

- Explanations

- Summaries

- Structuring text

- Idea generation

- High-level code reviews

- Documentation support

7.2 Critical use cases

- Numbers and calculations

- Legal statements

- Factual claims

- System-specific assumptions

- Business decisions

AI provides suggestions — not guarantees.

---

8. Core principles to remember

- AI does not understand — it adapts

- AI does not think — it evaluates probabilities

- Good prompts do not replace expertise

- Poor input produces convincing nonsense

- Humans remain responsible

---

9. Closing statement of the training

Artificial intelligence is not a replacement for thinking, but an amplifier of clarity.

Unclear thinking produces well-phrased nonsense.

---

10. Recommended next steps (optional)

- Deliberate experimentation with prompts

- Critical evaluation of results

- Using AI as a tool, not an authority

- Periodic refresh of technical fundamentals

---

Document status

Internal technical training document

No marketing content, no product references, no certification focus