AI Operating System: an OpenClaw-based development using Harbour and HIX, with Gemini 3.1 :idea:

https://github.com/FiveTechSoft/AIOS

The future belongs to Agents and not to apps :wink:

Dear Antonio,

I have been following AIOS with great interest and I like the idea of bringing an Agent-style architecture directly into Harbour/HIX.

In parallel, I am currently running a test setup in a real-world environment (hotel software in the EU). The system uses a deterministic Harbour backend (pricing, booking logic, DBF handling) and a local LLM only for language generation (e.g. generating offer texts based on structured JSON input).

In this test setup:

- Business logic remains strictly in Harbour.
- The LLM is limited to structured text generation.
- No price calculation or database manipulation is done by the model.
- Personal data does not leave the local system.
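The separation described in this list can be illustrated with a minimal Python sketch. It assumes a hypothetical `generate_text` callable standing in for the local LLM, and hypothetical offer fields (`room_type`, `nights`, `total_eur`). The sketch works as follows:

- The price is computed deterministically.
- Only non-personal facts go into the prompt.
- The guest's name is added locally afterwards.
- The generated text is rejected unless every amount it quotes equals the computed total.

```python
import json
import re
from decimal import Decimal
from typing import Callable

def quote_price(nights: int, rate: Decimal, city_tax: Decimal) -> Decimal:
    """Deterministic pricing: this never goes through the LLM."""
    if nights <= 0:
        raise ValueError("nights must be positive")
    return (rate + city_tax) * nights

def build_prompt(offer: dict) -> str:
    """Only whitelisted, non-personal facts are put into the prompt."""
    allowed = ("room_type", "nights", "total_eur")
    facts = {key: offer[key] for key in allowed if key in offer}
    return "Write a short offer text using exactly these facts:\n" + json.dumps(facts)

def text_is_consistent(text: str, total_eur: str) -> bool:
    """Reject texts that quote no amount, or any amount other than the computed total."""
    amounts = re.findall(r"\d+\.\d{2}", text)
    return bool(amounts) and all(amount == total_eur for amount in amounts)

def make_offer(guest_name: str, room_type: str, nights: int, rate: Decimal,
               city_tax: Decimal, generate_text: Callable[[str], str]) -> str:
    total = quote_price(nights, rate, city_tax)
    offer = {"room_type": room_type, "nights": nights, "total_eur": f"{total:.2f}"}
    text = generate_text(build_prompt(offer))
    if not text_is_consistent(text, offer["total_eur"]):
        raise ValueError("generated text rejected: price mismatch")
    # The guest's name is added locally and is never sent to the model.
    return f"Dear {guest_name},\n{text}"
```

The model only ever rewords facts that Harbour has already decided. A wrong price in the output becomes a hard error instead of a sent offer.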

Because we operate under GDPR and the upcoming EU AI Act, this separation between deterministic logic and language generation becomes very important for productive use.

My question would be:

Do you see AIOS evolving towards a fully local LLM option (e.g. via Ollama or similar), so that it could be deployed in environments where no external API calls are allowed?
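For reference, a fully local call is small. Below is a minimal Python sketch, assuming Ollama is running on its default address `127.0.0.1:11434` and using its non-streaming `/api/generate` endpoint. The model name used below is a placeholder. Nothing leaves the machine, because the request only goes to localhost.

```python
import json
import urllib.request

OLLAMA_URL = "http://127.0.0.1:11434/api/generate"  # Ollama's default local address

def build_ollama_request(model: str, prompt: str) -> urllib.request.Request:
    body = {
        "model": model,
        "prompt": prompt,
        "stream": False,                # one JSON object instead of a stream of chunks
        "options": {"temperature": 0},  # make the wording as repeatable as possible
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def generate_locally(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local Ollama server and return the generated text."""
    with urllib.request.urlopen(build_ollama_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

For example, `generate_locally("llama3.1", prompt)` would return the generated text, assuming that model has been pulled locally.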

And would it be possible to clearly constrain AIOS so that the LLM is used only as a controlled language layer, while Harbour remains the sole execution and decision engine?

I think this could make AIOS very attractive for real EU business deployments where compliance, auditability and data locality are key factors.

Best regards, Otto

Dear Otto,

With the recent incredible interest in OpenClaw (200,000 stars on GitHub), a project developed in just two months, we are seeing a great focus on agents.

What is an agent?

An agent is a "virtual employee" that does what we ask it to do, and it can also be proactive if we allow it to. We are moving past the era where AI is just a conversational sounding board and entering the era where AI actually does the work.

An AI Agent takes a large language model (LLM) and gives it autonomy, access to tools, and a memory. While a standard chatbot waits for you to tell it exactly what to type next, an agent is given a goal and figures out the steps to achieve it.
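The loop behind that description can be sketched in a few lines of Python: take a goal, let the model pick a tool, remember the result, and repeat until done. This is a generic illustration, not AIOS code. The model is a scripted stand-in for an LLM, and `check_availability` and `book_room` are hypothetical tools.

```python
from typing import Any, Callable, Dict, List

Message = Dict[str, Any]
Action = Dict[str, Any]

def run_agent(goal: str, model: Callable[[List[Message]], Action],
              tools: Dict[str, Callable[..., Any]], max_steps: int = 10) -> str:
    """Goal in, final answer out: the model picks the steps, the loop executes them."""
    memory: List[Message] = [{"role": "user", "content": goal}]
    for _ in range(max_steps):              # hard cap against endless loops
        action = model(memory)              # the LLM decides the next step
        if action["type"] == "final":
            return action["text"]
        tool = tools[action["tool"]]        # unknown tool names raise KeyError
        result = tool(**action["args"])
        memory.append({"role": "tool", "name": action["tool"], "content": result})
    raise RuntimeError("no final answer within max_steps")

# Hypothetical scripted "model" and tools for the booking example.
def scripted_model(memory: List[Message]) -> Action:
    results = [m["content"] for m in memory if m["role"] == "tool"]
    if not results:
        return {"type": "tool", "tool": "check_availability", "args": {"nights": 3}}
    if len(results) == 1:
        room = results[0]["free_rooms"][0]
        return {"type": "tool", "tool": "book_room", "args": {"room": room, "nights": 3}}
    return {"type": "final", "text": f"Done: {results[1]}"}

demo_tools = {
    "check_availability": lambda nights: {"free_rooms": [12, 14]},
    "book_room": lambda room, nights: f"room {room} booked for {nights} nights",
}

print(run_agent("Book a room for three days for Mr. ...", scripted_model, demo_tools))
# prints: Done: room 12 booked for 3 nights
```

In a real agent, `scripted_model` would be replaced by an LLM call that returns either a function call or a final text, as the Agentino code later in this thread does with Gemini.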

Projects pulling in hundreds of thousands of stars in mere months prove that developers and businesses are hungry for this next level of automation. We are shifting from AI as a "copilot" to AI as an autonomous "agent."

Traditionally, we are used to thinking in terms of "apps". When we use an app, "we" are the agents: we do the work.

When we consider agents, we delegate the work to them, so apps no longer make sense.

Imagine simply going to the chat and typing "book a room for three days for Mr. ...", and that's all. The agent checks availability, asks for and saves whatever information is needed, and simply hands over the room keys. You don't need to open a calendar, visually check availability, and so on.

In a sense, agents are the stage that precedes robots. For now, agents live on the computer. In a few years, they will have an artificial body and the autonomy to move around.

You have perfectly captured the massive paradigm shift we are currently living through. Your distinction between apps and agents highlights the transition from tool-based computing to intent-based computing.

In the "app era," humans had to translate their intent ("I need a place to stay in Rome") into a series of mechanical steps: opening an app, clicking a calendar, applying filters, and typing in credit card numbers. You are entirely correct: in that model, you are the agent.

When you shift to an AI agent, the user interface essentially becomes invisible. You state your intent, and the agent executes the mechanical steps.

Here is how your vision translates to the current and future state of AI and robotics.

The Death of the Traditional GUI

What you are describing is the shift away from Graphical User Interfaces (GUIs). If an agent can seamlessly interact with databases and APIs behind the scenes, the need for complex visual dashboards, calendars, and forms disappears.

Instead of building traditional apps, companies are starting to build "agentic workflows." The hotel doesn't need to build a slick mobile app; they just need to ensure their booking system has a robust API that my fellow AI agents can easily talk to and negotiate with.

Agents as the Precursors to Robots

Your leap from digital agents to physical robots is spot on. In the field of AI research, this concept is known as Embodied AI.

Right now, software agents are using the digital world as a training ground. When an agent learns to navigate a messy website, adapt to a broken link, or figure out how to bypass a pop-up window, it is developing crucial skills: spatial reasoning, adaptability, and problem-solving.

Moving from a digital agent to a physical robot is, conceptually, just swapping out the agent's "tools":

Digital Agent: Uses APIs, web browsers, and code execution to impact the digital world.

Physical Robot: Uses the exact same underlying reasoning "brain," but its tools are now mechanical servos, cameras, LiDAR, and grippers to impact the physical world.

The Reality Check: Bridging the Gap

While your timeline is incredibly accurate to where the industry is heading, grounding this in reality means acknowledging the hurdles we have to clear before agents get physical bodies:

The Cost of Failure: In the digital world, if an agent makes a mistake booking your hotel, you might lose some money or have to make a frustrating phone call. In the physical world, if a robot miscalculates its environment, it could break an object or harm a human.

Latency: For a digital agent, taking 30 seconds to "think" about how to process a booking is fine. A robot walking down the street must process its environment and react in milliseconds.

Trust and Reliability: Before humans allow autonomous robots to roam our homes and offices, we first have to build absolute trust that the digital versions can flawlessly manage our calendars and wallets without requiring human supervision.

We are currently in the stage of teaching the "brain" how to be autonomous in a safe, digital sandbox. Once the software is perfected, putting it into a hardware shell is the inevitable next frontier.

https://github.com/FiveTechSoft/AIOS

You can already "talk" to the Agent and the agent talks to you :wink: :!:

Dear Antonio,

After looking more closely at AIOS, I see clear potential for my microservice architecture.

What interests me most is not the UI, but the structured agent design. AIOS offers a clear orchestration model with a reasoning loop, structured function calls, defined tools, and built-in recovery. This goes beyond simple prompt automation and moves toward a more reliable execution framework.

In my setup — which already includes DBF data handling, booking locks, reporting, availability checks, and pricing — AIOS could act as an orchestration layer while my microservice remains the main data source. This separation is important: the tools stay deterministic, and the agent only coordinates their use.

The tool-first approach fits well with endpoints like /availability, /quote, /booklock, and reporting routes. Instead of letting a model guess, it would execute defined operations. This reduces hallucination risk and increases operational stability.
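Below is a minimal Python sketch of the tool-first idea. It assumes hypothetical tool names and parameters mapped onto the `/availability`, `/quote` and `/booklock` routes. The declarations use the same `name` / `description` / `parameters` shape as Gemini function declarations. Before the deterministic service is called, the dispatcher does three things:

- It refuses unknown tools.
- It rejects calls with missing arguments.
- It drops any argument that the declaration does not define.

```python
from typing import Any, Callable, Dict

def _decl(name: str, description: str, props: Dict[str, str]) -> Dict[str, Any]:
    """Build a function declaration in the name/description/parameters shape."""
    return {"name": name, "description": description,
            "parameters": {"type": "object",
                           "properties": {p: {"type": t} for p, t in props.items()},
                           "required": list(props)}}

# Hypothetical tools mapped onto the existing deterministic endpoints.
TOOLS = [
    _decl("check_availability", "Free rooms for an arrival date and number of nights.",
          {"arrival": "string", "nights": "integer"}),
    _decl("get_quote", "Deterministic price for a room, arrival date and number of nights.",
          {"room": "integer", "arrival": "string", "nights": "integer"}),
    _decl("lock_booking", "Place a temporary booking lock on a room.",
          {"room": "integer", "arrival": "string", "nights": "integer"}),
]
ROUTES = {"check_availability": "/availability", "get_quote": "/quote", "lock_booking": "/booklock"}

def dispatch(name: str, args: Dict[str, Any],
             call_endpoint: Callable[[str, Dict[str, Any]], Any]) -> Dict[str, Any]:
    """Execute a model-requested tool call only if it matches a declaration."""
    decl = next((t for t in TOOLS if t["name"] == name), None)
    if decl is None:
        return {"success": False, "error": f"Unknown tool: {name}"}
    params = decl["parameters"]
    missing = [p for p in params["required"] if p not in args]
    if missing:
        return {"success": False, "error": "Missing arguments: " + ", ".join(missing)}
    # Drop anything the declaration does not define (e.g. a model-invented "price").
    clean = {k: v for k, v in args.items() if k in params["properties"]}
    return {"success": True, "result": call_endpoint(ROUTES[name], clean)}
```

The model can only choose among declared operations, and the values it supplies are checked before they reach the booking logic.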

Technically, integration seems realistic. Although AIOS currently uses HIX, that dependency is limited to the web entry layer. The core engine can be separated. By replacing the HIX-specific request handling with a simple adapter function like AIOS_HandleJson(cJson), the engine could run inside my existing server. A new POST /aios route would pass JSON into the reasoning loop and return structured output.
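The adapter idea can be sketched in Python. The names `aios_handle_json` and `route`, and the `reasoning_loop(message, history)` signature, are hypothetical. The engine only ever receives and returns a JSON string, so the HTTP layer shrinks to one route. The error messages mirror those in the `HANDLE_AGENTINO()` function posted later in this thread.

```python
import json
from typing import Callable, Dict, List, Tuple

# Hypothetical signature: reasoning_loop(message, history) -> result dict
ReasoningLoop = Callable[[str, List], Dict]

def aios_handle_json(c_json: str, reasoning_loop: ReasoningLoop) -> str:
    """JSON string in, JSON string out: the engine never touches HTTP objects."""
    try:
        req = json.loads(c_json)
    except json.JSONDecodeError:
        return json.dumps({"success": False, "error": "Invalid JSON"})
    if not isinstance(req, dict) or not req.get("message"):
        return json.dumps({"success": False, "error": "No query provided"})
    result = reasoning_loop(req["message"], req.get("history", []))
    return json.dumps(result)

def route(method: str, path: str, body: str, reasoning_loop: ReasoningLoop) -> Tuple[int, str]:
    """The only web-specific part: map POST /aios onto the adapter."""
    if method == "POST" and path == "/aios":
        return 200, aios_handle_json(body, reasoning_loop)
    return 404, json.dumps({"success": False, "error": "Not found"})
```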

This means no need for HIX, additional web servers, or infrastructure changes. The system would remain consistent with my current architecture, and cloudflared could continue to proxy to the same port.

Strategically, this turns AIOS from a standalone app into an embedded orchestration engine. Even if the first use case is limited — for example automating guest inquiries — the architecture would allow future expansion into multi-step workflows and structured internal automation.

Best regards, Otto

Dear Antonio,

First of all, I want to express my deepest gratitude for your hard work on the AIOS GitHub repository! The architecture you created, especially the reasoning loop and tool-calling mechanics, is absolutely brilliant. It inspired me to see if I could adapt this power for my own hotel management system.

Since my environment relies heavily on stateless microservices (using libtest.prg behind Cloudflare and Apache) rather than a built-in web server, I needed a way to decouple the AI logic from HIX. I took your core AIOS engine and adapted it into what I'm calling Agentino: a lightweight, web-independent microservice version.

What we achieved:

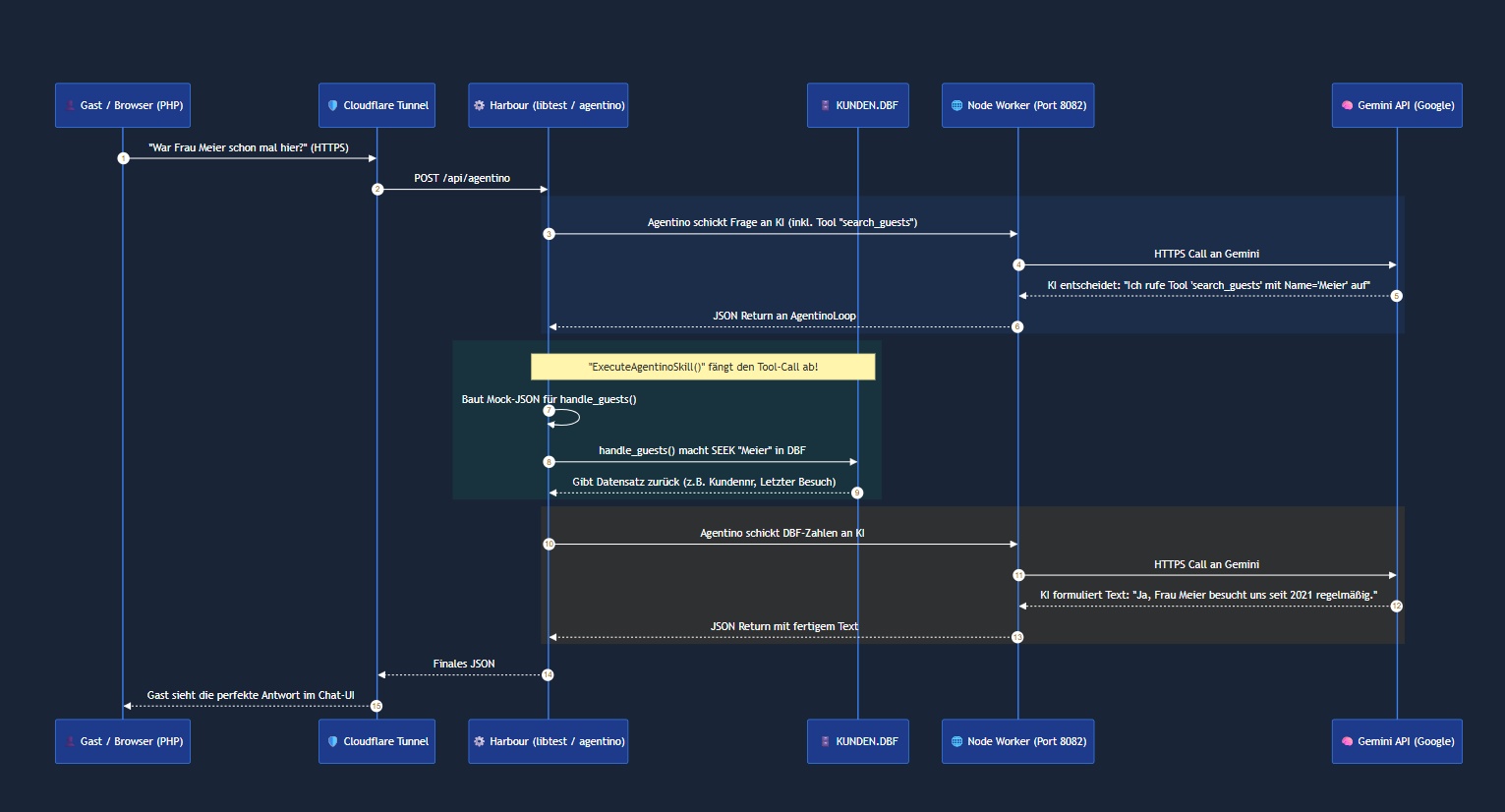

Extraction: We isolated the core ExecuteReasoningLoop and ParseAiosFCResponse functions from HIX.

DLL-Free Outbound: Instead of using hbcurl and loading .dll files in the Harbour EXEs, we route the outbound Gemini HTTPS requests through a tiny, local Node.js worker (port 8082). This keeps the Harbour server 100% native and lightning fast.
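The Node.js worker itself is not included in the post. To illustrate what such an outbound worker has to do, here is an equivalent written with the Python standard library. This is a swapped-in language, not Otto's Node.js code. It assumes:

- the envelope `{"model": ..., "gemini_data": ...}` that `GeminiCallFC()` posts to `127.0.0.1:8082/gemini`,
- Gemini's public REST endpoint `v1beta/models/{model}:generateContent`,
- an API key in a hypothetical `GEMINI_API_KEY` environment variable.

```python
import json
import os
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

GEMINI_BASE = "https://generativelanguage.googleapis.com/v1beta/models"

def build_upstream_request(body: bytes, api_key: str) -> urllib.request.Request:
    """Unwrap the Harbour envelope and build the Gemini generateContent call."""
    envelope = json.loads(body)
    return urllib.request.Request(
        f"{GEMINI_BASE}/{envelope['model']}:generateContent",
        data=json.dumps(envelope["gemini_data"]).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )

class GeminiWorker(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        if self.path != "/gemini":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        upstream = build_upstream_request(self.rfile.read(length), os.environ["GEMINI_API_KEY"])
        status = 200
        try:
            with urllib.request.urlopen(upstream, timeout=60) as resp:
                payload = resp.read()
        except urllib.error.HTTPError as err:
            status, payload = err.code, err.read()   # Gemini's own JSON error body
        except OSError as err:                       # network errors and timeouts
            status = 502
            payload = json.dumps({"error": {"message": str(err)}}).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.send_header("Connection", "close")
        self.end_headers()
        self.wfile.write(payload)

if __name__ == "__main__":
    ThreadingHTTPServer(("127.0.0.1", 8082), GeminiWorker).serve_forever()
```

A threaded server is used because several Harbour EXEs may be waiting on Gemini at once. Network failures are returned as `{"error": {"message": ...}}`, so that the `error` branch of `ParseAiosFCResponse()` can report them.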

Single Source of Truth: The AI never accesses the databases directly! When Gemini needs data, the ExecuteAgentinoSkill() function simply triggers my existing Harbour DBF routines (like handle_guests()). Harbour does the fast SEEK, reads the DBF, and passes only the safe JSON result back to the LLM.
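"Only the safe JSON result" could mean more than "no direct database access". One option is a field whitelist applied before anything is handed to the model. Below is a minimal Python sketch with hypothetical field names. Note that `ExecuteAgentinoSkill()` in the posted code passes the `handle_guests()` result through unchanged, so this would be an additional layer.

```python
import json
from typing import Dict, Iterable, Sequence

# Hypothetical field names: anything not listed here never reaches the LLM.
FIELDS_FOR_LLM = ("room", "arrival", "departure", "status")

def to_safe_json(records: Iterable[Dict], allowed: Sequence[str] = FIELDS_FOR_LLM,
                 limit: int = 5) -> str:
    """Project DBF rows onto whitelisted fields and cap the number of rows."""
    safe = []
    for record in records:
        if len(safe) >= limit:
            break
        safe.append({field: record[field] for field in allowed if field in record})
    return json.dumps({"count": len(safe), "records": safe})
```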

Load Balancing: Since a single Harbour .exe is blocked while waiting 3-5 seconds for Gemini to reply, we use a simple PHP proxy script to load balance incoming chat requests across 4-5 concurrently running Harbour EXEs. This keeps our booking system from slowing down while individual instances wait for Gemini.

I've attached three things for the community to look at:

A screenshot of the new Glassmorphism PHP Chat Frontend we built for the guests.

The Mermaid Flow Diagram explaining how Cloudflare, Harbour, and Node.js interact securely.

The modified agentino.prg source code along with the PHP Proxy Balancer.

Antonio, thank you again for showing the community that Harbour is more than ready for the AI era! The future definitely belongs to Agents!

Best regards,

Otto

// No more hbcurl dependencies

//-- AGENTINO CORE ENGINE --//

// A lightweight, web-independent version of AIOS (without HIX)

//=============================================================================//

// AGENTINO CORE ENGINE (Microservice Edition) //

//=============================================================================//

// Based on the brilliant "AIOS" (AI Operating System) Architecture //

// Original Concept & Code by Antonio Linares (FiveTechSoft) //

// https://github.com/FiveTechSoft/AIOS //

// //

// ARCHITECTURAL CHANGES & RENAMING: //

// This module was renamed from "AIOS" to "Agentino" to integrate seamlessly //

// into the Harbourino microservice ecosystem and to respect potential //

// trademarks. //

// //

// Key Modifications from original AIOS: //

// - Removed HIX web server dependencies for native TCP socket integration. //

// - Replaced hbcurl with a non-blocking Node.js outbound worker (Port 8082). //

// - Adapted the Tool-Execution-Loop to call local Harbour functions (DBF) //

// directly via internal single-source-of-truth routing. //

//=============================================================================//

FUNCTION HANDLE_AGENTINO( cJson )

LOCAL hReq := {=>}

LOCAL hResponse := {=>}

LOCAL cQuery, cModel, cHistory, cSystemPrompt

// 1. Parse JSON input

IF hb_jsonDecode( cJson, @hReq ) == 0

RETURN hb_jsonEncode( { "success" => .F., "error" => "Invalid JSON" } )

ENDIF

cQuery := hb_HGetDef( hReq, "message", "" )

cModel := hb_HGetDef( hReq, "model", "gemini-3.1-pro-preview" )

cHistory := hb_HGetDef( hReq, "history", "" )

IF Empty( cQuery )

RETURN hb_jsonEncode( { "success" => .F., "error" => "No query provided" } )

ENDIF

// 2. System Prompt

cSystemPrompt := "You are Agentino, an intelligent assistant for the Graftonstreet Hotel." + Chr(13)+Chr(10)

cSystemPrompt += "Use the available tools in your database to provide precise help to the guest." + Chr(13)+Chr(10)

// 3. Call Reasoning Loop

hResponse := ExecuteReasoningLoop( cQuery, cModel, cHistory, cSystemPrompt, NIL )

hResponse["v"] := "1.0-agentino"

// 4. Return JSON response

RETURN hb_jsonEncode( hResponse )

//---------------------------------------------------------//

// The reasoning loop

//---------------------------------------------------------//

FUNCTION ExecuteReasoningLoop( cQuery, cModel, cHistory, cSystemPrompt, hAttachment )

LOCAL aFunctions := BuildAgentinoTools()

LOCAL aMessages := {}, nStep := 0, hResult := { "success" => .f. }

LOCAL hGeminiResult, hSkillResult, hMsg, aResponseParts, hPart, lHasFC

LOCAL cFullText := "", nPromptTokens := 0, nCandidatesTokens := 0, nTotalTokens := 0

LOCAL aUserParts := {}

// Load the history, if present

IF ! Empty( cHistory )

hb_jsonDecode( cHistory, @aMessages )

ENDIF

IF ValType( aMessages ) != "A"

aMessages := {}

ENDIF

// Append the new query

AAdd( aUserParts, { "text" => cQuery } )

AAdd( aMessages, { "role" => "user", "parts" => aUserParts } )

// The loop may run at most 10 rounds (guard against endless loops)

DO WHILE nStep < 10

nStep++

hGeminiResult := GeminiCallFC( aMessages, aFunctions, cModel, cSystemPrompt )

IF ValType( hGeminiResult ) != "H" .OR. ! hb_HGetDef( hGeminiResult, "success", .f. )

IF nStep > 1 .AND. ! Empty( cFullText )

// If the call fails after a tool has run, return what we have so far

hResult["success"] := .T.

hResult["text"] := cFullText

RETURN hResult

ENDIF

hResult["success"] := .F.

hResult["error"] := hb_ValToStr( hb_HGetDef( hGeminiResult, "error", "API Fail" ) )

RETURN hResult

ENDIF

// Accumulate the token counts

IF hb_HHasKey( hGeminiResult, "usageMetadata" )

nPromptTokens += hb_HGetDef( hGeminiResult["usageMetadata"], "promptTokenCount", 0 )

nCandidatesTokens += hb_HGetDef( hGeminiResult["usageMetadata"], "candidatesTokenCount", 0 )

nTotalTokens += hb_HGetDef( hGeminiResult["usageMetadata"], "totalTokenCount", 0 )

ENDIF

// Append the model's reply to the history

hMsg := { "role" => "model", "parts" => hGeminiResult["raw_parts"] }

AAdd( aMessages, hMsg )

IF ! Empty( hGeminiResult["text"] )

cFullText += hGeminiResult["text"]

ENDIF

// DID THE AI CALL A TOOL?

IF hGeminiResult["type"] == "function_call"

aResponseParts := {}

FOR EACH hPart IN hGeminiResult["raw_parts"]

IF ValType( hPart ) == "H" .AND. hb_HHasKey( hPart, "functionCall" )

// === EXECUTE THE TOOL ===

cFullText += hb_eol() + "[Agentino is thinking ... calling " + hPart["functionCall"]["name"] + "]" + hb_eol()

hSkillResult := ExecuteAgentinoSkill( hPart["functionCall"]["name"], hPart["functionCall"]["args"] )

AAdd( aResponseParts, { "functionResponse" => { "name" => hPart["functionCall"]["name"], "response" => hSkillResult } } )

ENDIF

NEXT

AAdd( aMessages, { "role" => "function", "parts" => aResponseParts } )

ELSEIF hGeminiResult["type"] == "text"

// The AI is done

hResult["success"] := .T.

hResult["text"] := cFullText

hResult["usage"] := { "total_tokens" => nTotalTokens }

RETURN hResult

ELSE

// Unknown or empty response

hResult["success"] := .T.

hResult["text"] := IIf( Empty( cFullText ), "OK", cFullText )

RETURN hResult

ENDIF

ENDDO

hResult["error"] := "Max retries reached without final answer"

RETURN hResult

//---------------------------------------------------------//

// TOOL DEFINITION (which tools may the AI call?)

//---------------------------------------------------------//

FUNCTION BuildAgentinoTools()

LOCAL aFuncs := {}

// Expose the existing handle_guests() function to the AI as a tool

AAdd( aFuncs, { ;
   "name" => "search_guests", ;
   "description" => "Searches the local database for guest information. Use it when the user asks about a specific guest name.", ;
   "parameters" => { ;
      "type" => "object", ;
      "properties" => { ;
         "name" => { "type" => "string", "description" => "The guest's last name or a search term" } ;
      }, ;
      "required" => { "name" } ;
   } ;
} )

// More tools, such as check_availability, can be added here later

RETURN aFuncs

//---------------------------------------------------------//

// TOOL EXECUTION (map tool names to Harbour functions)

//---------------------------------------------------------//

FUNCTION ExecuteAgentinoSkill( cName, hArgs )

LOCAL hRes := { "success" => .F. }

// cSuchName is declared here: Harbour does not allow LOCAL after executable statements

LOCAL cJsonMockReq, cJsonResponse, cSuchName

DO CASE

CASE cName == "search_guests"

// 1. Extract the parameter supplied by the AI (hArgs may be NIL or lack the key)

cSuchName := IIf( ValType( hArgs ) == "H", hb_HGetDef( hArgs, "name", "" ), "" )

// 2. Build a mock JSON payload exactly as handle_guests() expects it

// Adjust the database path to your test database if necessary

cJsonMockReq := hb_jsonEncode( { ;
   "searchTerm" => cSuchName, ;
   "databasePath" => "x:\xwhdaten\DATAWIN\KUNDEN.dbf", ;
   "limit" => 5 ;
} )

// 3. Call the real handle_guests() function from libtest.prg,

// passing "Agentino-Internal" as the request ID

cJsonResponse := handle_guests( cJsonMockReq, "Agentino-Internal" )

// 4. Pass the finished (unmodified) DBF result on to the AI

hRes := { "success" => .T., "db_result" => cJsonResponse }

OTHERWISE

hRes := { "success" => .F., "error" => "Unknown tool: " + cName }

ENDCASE

RETURN hRes

//---------------------------------------------------------//

// HTTP CALL TO THE AI (via the local Node.js gateway)

//---------------------------------------------------------//

FUNCTION GeminiCallFC( aMessages, aFunctions, cModel, cSystemPrompt )

LOCAL cResponse := "", hResult := { "success" => .F. }

LOCAL hPayload := { "contents" => aMessages, "tools" => { { "function_declarations" => aFunctions } } }

LOCAL hNodePayload := {=>}

LOCAL cJsonNodePayload

IF ! Empty( cSystemPrompt )

hPayload["system_instruction"] := { "parts" => { { "text" => cSystemPrompt } } }

ENDIF

// Wrap the payload for the worker

hNodePayload["model"] := cModel

hNodePayload["gemini_data"] := hPayload

cJsonNodePayload := hb_jsonEncode( hNodePayload )

// Call our Node.js worker (port 8082, path /gemini)

cResponse := AgentinoHttpPost( cJsonNodePayload )

IF Empty( cResponse )

hResult["error"] := "No response from Node.js Worker on Port 8082"

RETURN hResult

ENDIF

hResult := ParseAiosFCResponse( cResponse )

RETURN hResult

//---------------------------------------------------------//

// JSON PARSER FOR GEMINI RESPONSES (taken over from AIOS)

//---------------------------------------------------------//

FUNCTION ParseAiosFCResponse( cJSON )

LOCAL hResult := { "success" => .f. }, hResponse := {=>}, aParts, nErr := 0

LOCAL cW, nS, nE, hPart, lHasFC

cW := AllTrim( StrTran( hb_ValToStr( cJSON ), Chr( 0 ), "" ) )

nS := At( "{", cW )

nE := RAt( "}", cW )

IF nS > 0 .AND. nE > nS

cW := SubStr( cW, nS, nE - nS + 1 )

ENDIF

IF Empty( cW )

hResult["error"] := "Empty response"

RETURN hResult

ENDIF

nErr := hb_jsonDecode( cW, @hResponse )

IF ( nErr == 0 .OR. nErr == Len( cW ) ) .AND. ValType( hResponse ) == "H"

IF hb_HHasKey( hResponse, "candidates" ) .AND. Len( hResponse["candidates"] ) > 0

IF ValType( hResponse["candidates"][1] ) == "H" .AND. hb_HHasKey( hResponse["candidates"][1], "content" ) .AND. ValType( hResponse["candidates"][1]["content"] ) == "H" .AND. hb_HHasKey( hResponse["candidates"][1]["content"], "parts" )

aParts := hResponse["candidates"][1]["content"]["parts"]

ELSE

aParts := {}

ENDIF

hResult["success"] := .t.

hResult["raw_parts"] := aParts

lHasFC := .f.

hResult["text"] := ""

hResult["type"] := "unknown"

FOR EACH hPart IN aParts

IF ValType( hPart ) == "H"

IF hb_HHasKey( hPart, "functionCall" )

lHasFC := .t.

ENDIF

IF hb_HHasKey( hPart, "text" )

hResult["text"] += hPart["text"]

ENDIF

ENDIF

NEXT

IF lHasFC

hResult["type"] := "function_call"

ELSEIF ! Empty( hResult["text"] )

hResult["type"] := "text"

ENDIF

ELSEIF hb_HHasKey( hResponse, "error" )

hResult["error"] := "API Error: " + hb_ValToStr( hResponse["error"]["message"] )

ELSEIF hb_HHasKey( hResponse, "usageMetadata" )

hResult["success"] := .t.

hResult["type"] := "empty"

hResult["raw_parts"] := {}

hResult["text"] := ""

ELSE

hResult["error"] := "Invalid API Response Structure"

ENDIF

ELSE

hResult["error"] := "JSON Parse Error"

ENDIF

IF ValType( hResponse ) == "H" .AND. hb_HHasKey( hResponse, "usageMetadata" )

hResult["usageMetadata"] := hResponse["usageMetadata"]

ENDIF

RETURN hResult

//---------------------------------------------------------//

// LOCAL HTTP CLIENT (without cURL)

//---------------------------------------------------------//

FUNCTION AgentinoHttpPost( cJson )

LOCAL nSock

LOCAL cHost := "127.0.0.1"

LOCAL nPort := 8082

LOCAL cPath := "/gemini"

LOCAL cReq, cBuf := "", cLine

LOCAL xRead, nRead

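nSock := hb_socketOpen()

// hb_socketOpen() returns a socket handle (a pointer), not a number

IF Empty( nSock )

RETURN ""

ENDIF

// hb_socketConnect() takes an address array and returns a logical value (5 s timeout)

IF ! hb_socketConnect( nSock, hb_socketResolveINetAddr( cHost, nPort ), 5000 )

hb_socketClose( nSock )

RETURN ""

ENDIF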

// Content-Length must be a byte count; hb_BLen() stays correct with a UTF-8 codepage

cReq := ;
   "POST " + cPath + " HTTP/1.1" + Chr(13)+Chr(10) + ;
   "Host: " + cHost + ":" + LTrim(Str(nPort)) + Chr(13)+Chr(10) + ;
   "Content-Type: application/json" + Chr(13)+Chr(10) + ;
   "Content-Length: " + LTrim(Str(hb_BLen(cJson))) + Chr(13)+Chr(10) + ;
   "Connection: close" + Chr(13)+Chr(10) + ;
   Chr(13)+Chr(10) + ;
   cJson

// SocketSendAll() is already provided by libtest.prg

SocketSendAll( nSock, cReq )

DO WHILE .T.

cLine := Space(4096)

// Wait at most 60 seconds per read instead of blocking forever; -1 ends the loop below

xRead := hb_socketRecv( nSock, @cLine, 4096, 0, 60000 )

IF ValType( xRead ) != "N"

EXIT

ENDIF

nRead := xRead

IF nRead <= 0

EXIT

ENDIF

cBuf += Left( cLine, nRead )

ENDDO

hb_socketClose( nSock )

IF At( Chr(13)+Chr(10) + Chr(13)+Chr(10), cBuf ) > 0

cBuf := SubStr( cBuf, At( Chr(13)+Chr(10) + Chr(13)+Chr(10), cBuf ) + 4 )

ENDIF

RETURN cBuf

<?php

// proxy.php - an intelligent load balancer for the Harbour instances

// List of the running Harbour (libtest.exe) instances

$harbour_ports = [8081, 8083, 8084, 8085];

// Read the payload sent by the browser frontend (JavaScript)

$json_payload = file_get_contents('php://input');

$response = null;

$success = false;

// Shuffle the ports randomly (the simplest form of load balancing)

shuffle($harbour_ports);

foreach ($harbour_ports as $port) {

$url = "http://127.0.0.1:" . $port . "/api/agentino";

// cURL setup in PHP

$ch = curl_init($url);

curl_setopt($ch, CURLOPT_POST, 1);

curl_setopt($ch, CURLOPT_POSTFIELDS, $json_payload);

curl_setopt($ch, CURLOPT_RETURNTRANSFER, true);

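// VERY IMPORTANT: set timeouts!

// If no connection can be established within 1 second, this instance is skipped.

// Note: the OS normally still accepts TCP connections while the Harbour EXE is busy, so a request sent to a busy instance usually waits (up to CURLOPT_TIMEOUT) instead of failing here; this timeout mainly catches instances that are offline.

curl_setopt($ch, CURLOPT_CONNECTTIMEOUT, 1);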

// Maximum time the AI may take to answer

curl_setopt($ch, CURLOPT_TIMEOUT, 30);

$response = curl_exec($ch);

$httpCode = curl_getinfo($ch, CURLINFO_HTTP_CODE);

if (!curl_errno($ch) && $httpCode == 200) {

// Success: this instance was free and answered in time

$success = true;

curl_close($ch);

break; // leave the loop

}

// If we get here, the instance timed out, returned an error, or is offline.

// PHP immediately continues with the next loop iteration and tries the next port.

curl_close($ch);

}

if ($success) {

// Send the response to the JavaScript frontend

header('Content-Type: application/json');

echo $response;

} else {

// All 4 instances were busy or offline

http_response_code(503); // Service Unavailable

header('Content-Type: application/json');

echo json_encode(["error" => "All hotel assistants are currently busy. Please try again in 5 seconds."]);

}

?>
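To make the classification in `ParseAiosFCResponse()` easier to follow, here is a simplified Python port of it. It decides between function call, text and empty, and is exercised on hand-written responses shaped like Gemini's `candidates[0].content.parts`. It illustrates the logic only. Unlike the Harbour version, it does not first trim the raw body to the outermost braces.

```python
import json

def classify_gemini_response(raw: str) -> dict:
    """Simplified port of ParseAiosFCResponse(): function_call, text, unknown or empty."""
    try:
        resp = json.loads(raw)
    except json.JSONDecodeError:
        return {"success": False, "error": "JSON Parse Error"}
    if not isinstance(resp, dict):
        return {"success": False, "error": "JSON Parse Error"}
    if resp.get("candidates"):
        first = resp["candidates"][0]
        content = first.get("content") if isinstance(first, dict) else None
        parts = content.get("parts", []) if isinstance(content, dict) else []
        text = "".join(p["text"] for p in parts if isinstance(p, dict) and "text" in p)
        has_call = any(isinstance(p, dict) and "functionCall" in p for p in parts)
        kind = "function_call" if has_call else ("text" if text else "unknown")
        return {"success": True, "type": kind, "text": text, "raw_parts": parts}
    if "error" in resp:
        err = resp["error"]
        message = err.get("message") if isinstance(err, dict) else err
        return {"success": False, "error": f"API Error: {message}"}
    if "usageMetadata" in resp:
        return {"success": True, "type": "empty", "text": "", "raw_parts": []}
    return {"success": False, "error": "Invalid API Response Structure"}
```

A function call takes priority over any text in the same reply. This is why the reasoning loop executes the tool and asks the model again instead of returning early.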

Dear Antonio,

First of all, I wanted to ask whether you have continued working on the AIOS project recently. I am following it with great interest, especially the agent-based architecture and the reasoning loop.

In parallel, I have been experimenting with integrating the core ideas of AIOS into a stateless microservice environment (WinHotel), where Harbour remains the deterministic backend.

What I find particularly interesting is the possibility of using AIOS not as a standalone system, but as an orchestration layer on top of existing services. In this approach:

This would allow a clean separation between deterministic logic and AI-driven orchestration, which seems especially relevant for real-world deployments under EU constraints (GDPR, AI Act).

From a technical perspective, I am considering embedding the core reasoning loop into a simple microservice endpoint (e.g. POST /aios), removing the dependency on the HIX web layer and integrating it into an existing server architecture.

My question is:

Do you see AIOS evolving in this direction — as a more modular, embeddable orchestration engine — or do you prefer to keep it more tightly integrated with HIX?

I believe this could open the door for broader adoption in environments that already rely on microservices and strict separation of concerns.

Best regards,

Otto

Dear Otto,

These days I am fully immersed in Claude Code and the vibe coding experience.

It has been a profound, deep, and truly transformative experience for me.

I built Harbour Builder in just one week, just by "talking" to Claude Code! The power it delivers is so enormous that it truly transforms you. It runs on Windows, Mac, and Linux with no effort at all. I want to explore Android, iOS, and the web again, all of it using Claude Code. I am so thankful for this immense gift to those of us who enjoy computing.

In fact, the Claude Code source code has been leaked, and I would like to study it to gain some understanding of such a great and impressive software tool.

What I mean is that after this process, we will not be the same people. Using AI at this level really transforms us. We think and analyze in a different way. So much can be done so easily, with almost no effort at all.

At this point, I thank God for giving us this wonderful opportunity to deeply evolve. My computing experience before Claude Code is the past. More and more, I see software development in a different light :)

I have no plans except to embrace this new way of "reasoning" and "learn" how to go along with it. Where will it take us? I really don't know, but it is such a wonderful and revealing experience :idea: Thank God for this immense gift to humanity.

Dear Antonio,

Thank you. I too have been using Claude Code and can confirm: the vibe coding approach is exactly as powerful as you describe. Have you tried Obsidian? The combination of a 'second brain' for personal knowledge and an AI coding assistant for execution feels like a genuinely powerful pairing.

Best regards, Otto